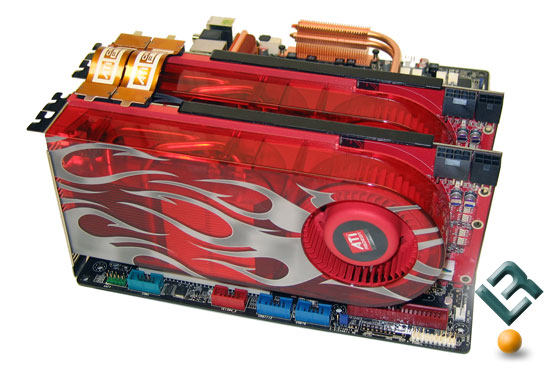

ATI Radeon HD 5800

As many of you know, today is the day ATI lifts the curtain on its latest generation of desktop graphics cards. Two models kick things off: the HD 5850 and the top-end single-GPU offering, the HD 5870. In the near future we'll see others added to the lineup, the most anticipated being the dual-GPU HD 5870 X2. All of these are the first DirectX 11 cards to hit the market.

AMD today launched the most powerful processor ever created, found in its next-generation graphics cards, the ATI Radeon™ HD 5800 series. These are the world’s first and only graphics cards to fully support Microsoft DirectX® 11, the new gaming and compute standard shipping shortly with the Microsoft Windows® 7 operating system. Boasting up to 2.72 TeraFLOPS of compute power, the ATI Radeon™ HD 5800 series effectively doubles the value consumers can expect from their graphics purchases, delivering twice the performance-per-dollar of previous generations of graphics products. AMD will initially release two cards: the ATI Radeon HD 5870 and the ATI Radeon HD 5850, each with 1GB of GDDR5 memory. With the ATI Radeon™ HD 5800 series of graphics cards, PC users can expand their computing experience with ATI Eyefinity multi-display technology, accelerate it with ATI Stream technology, and dominate the competition with superior gaming performance and full support for Microsoft DirectX® 11, making it a “must-have” consumer purchase just in time for the Microsoft Windows® 7 operating system.

“With the ATI Radeon HD 5800 series of graphics cards driven by the most powerful processor on the planet, AMD is changing the game, both in terms of performance and the experience,” said Rick Bergman, senior vice president and general manager, Products Group, AMD. “As the first to market with full DirectX 11 support…”

How well does the new HD 5870 perform against the still-solid, older-generation HD 4890 and competing models from NVIDIA? You can find out in our fresh review of Sapphire's stock model, which has just gone live now that the NDA has lifted.

Also watch out for several more articles over the next day or so, including a look at two of these bad boy HD 5870s in CrossFire later tonight, followed by an overclocking article.

Designed and built for purpose: Modeled on the full DirectX 11 specification, the ATI Radeon HD 5800 series of graphics cards delivers up to 2.72 TeraFLOPS of compute power in a single card, translating to superior performance in the latest DirectX 11 games, as well as in DirectX 9, DirectX 10, DirectX 10.1 and OpenGL titles, in single-card configurations or in multi-card configurations using ATI CrossFireX™ technology. When measured in terms of game performance in some of today’s most popular games, the ATI Radeon HD 5800 series is up to twice as fast as the closest competing product in its class, allowing gamers to enjoy incredible new DirectX 11 games (including the forthcoming DiRT™ 2 from Codemasters, Aliens vs. Predator™ from Rebellion, and updated versions of The Lord of the Rings Online™ and Dungeons and Dragons Online® Eberron Unlimited™ from Turbine) in stunning detail with incredible frame rates.

Generations ahead of the competition: Building on the success of the ATI Radeon™ HD 4000 series products, the ATI Radeon HD 5800 series of graphics cards is two generations ahead of DirectX 10.0 support, and features 6th-generation evolved AMD tessellation technology, 3rd-generation evolved GDDR5 support, 2nd-generation evolved…

There's a stack of other coverage of the new 5800 family of Radeon cards showing up around the web, which you can get to via the links below: